What is a Container?

- A container is a software unit (a wrapper) that packages everything your application needs to run: the application code, its dependencies, and so on.

- Think of it as a portable environment in which your application runs. You can manage a container yourself: starting it, stopping it, monitoring it, and so on.

Why Kubernetes?

- Suppose you need to run 10 different applications (microservices), that is, 10 containers.

- If each application also has to be highly available, you run 2 replicas of each one: 2 × 10 = 20 containers.

- Now you have to manage 20 containers.

- Could you manage 20 containers by hand? (20 is just an example; there could be many more, depending on the requirement.) It would certainly be difficult.

Orchestration

- A container orchestration tool or framework helps in such situations by automating much of the deployment and management overhead.

- One such container orchestration tool is Kubernetes.

What is Kubernetes?

- Kubernetes is an open-source container orchestration platform that automates the deployment, scaling, and management of containerized applications.

- It provides a set of abstractions and APIs for managing containers, so you can focus on building your application and not worry about the underlying infrastructure.

- With Kubernetes, you can manage multiple containers across multiple machines, making it easier to streamline and automate the deployment and management of your application infrastructure.

- Kubernetes has become the de facto standard for container orchestration in the cloud-native ecosystem.

Kubernetes Architecture

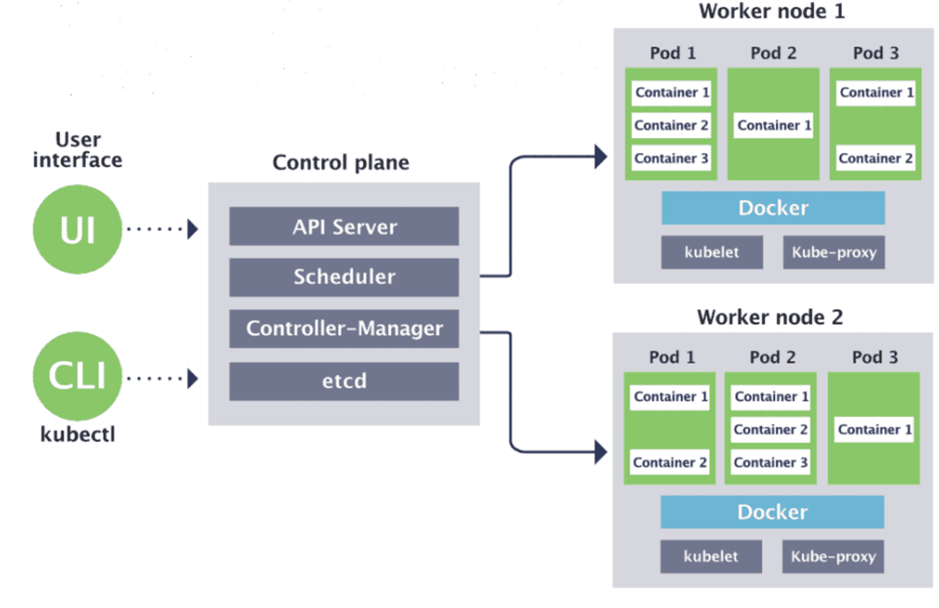

Control Plane:

This is the brain of the Kubernetes cluster and manages the overall state of the system. It includes:

- API Server: Provides a REST API for the Kubernetes control plane and handles requests from various Kubernetes components and external clients.

- etcd: This is a distributed key-value store that stores the configuration data of the entire Kubernetes cluster.

- Controller Manager: This component runs the controllers that maintain the desired state of the cluster. They monitor the state of various Kubernetes objects (e.g., ReplicaSets, Deployments, Services) and reconcile any differences between the observed state and the desired state.

- Scheduler: This component assigns Pods to worker nodes based on resource availability and other scheduling policies.
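
The "monitor and reconcile" behaviour of the Controller Manager can be illustrated with a toy loop. This is only a sketch in plain Python, not Kubernetes code: the `reconcile` function, the pod-name format, and the in-memory list that stands in for the observed cluster state are all assumptions made for illustration.

```python
import itertools

# Hypothetical counter used to give each toy pod a unique name.
_pod_ids = itertools.count(1)


def reconcile(desired_replicas, running_pods):
    """Return a new pod list that matches the desired replica count.

    `running_pods` is a plain list of pod names standing in for the
    observed state; a real controller would read it from the API server.
    """
    pods = list(running_pods)
    # Too few pods: create replacements until the count matches.
    while len(pods) < desired_replicas:
        pods.append(f"pod-{next(_pod_ids)}")
    # Too many pods: remove the surplus from the end of the list.
    while len(pods) > desired_replicas:
        pods.pop()
    return pods


if __name__ == "__main__":
    state = reconcile(3, [])      # start from an empty cluster
    print(state)
    state.remove(state[0])        # simulate one pod crashing
    state = reconcile(3, state)   # the loop restores the count
    print(state)
```

The point of the sketch is that the caller only states the target (3 replicas); the loop works out whether to add or remove pods from whatever state it finds.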

Worker Nodes:

These are the machines that run the application containers. Each worker node includes the following components:

- Kubelet: This component communicates with the API server to receive instructions and ensures that the containers are running correctly.

- Container Runtime: This is the software that runs the containerized applications (e.g., containerd, CRI-O, or Docker Engine through the cri-dockerd adapter).

- kube-proxy: This component handles network routing for services in the cluster.

Other Key Components

- Pod: A pod is the smallest deployable unit in Kubernetes and represents a single instance of a running process in the cluster. A pod can contain one or more containers.

- Container: A container is a lightweight, standalone executable package that contains everything needed to run an application, including code, runtime, system tools, and libraries.

- Service: A service is an abstraction that defines a set of pods and a policy for how to access them. Services provide a stable IP address and DNS name for a set of pods, allowing other parts of the application to access them.

- ReplicaSet: It ensures that a specified number of replicas of a pod are running at all times. It does not autoscale on its own; scaling the replica count based on demand is the job of the Horizontal Pod Autoscaler.

- Deployment: A higher-level object that manages ReplicaSets and provides declarative updates to the pods and ReplicaSets in the cluster.

- ConfigMap: A configuration store that holds configuration data in key-value pairs.

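- Secret: An object for storing and managing sensitive information such as passwords, API keys, and certificates, kept separate from application code and container images. By default, Secret values are only base64-encoded; encryption at rest has to be enabled separately.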

- Volume: A directory that is accessible to the containers running in a pod. Volumes can be used to store data or share files between containers.
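
A Service decides which pods belong to it by matching a label selector against pod labels. The sketch below shows that matching rule in plain Python, assuming simple equality-based selectors. The pod names, labels, and the `select_pods` helper are hypothetical and exist only for illustration.

```python
def matches(selector, labels):
    """True if every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


def select_pods(selector, pods):
    """Return the names of pods whose labels satisfy the selector."""
    return [pod["name"] for pod in pods if matches(selector, pod["labels"])]


# Hypothetical pods; in a real cluster these labels come from pod metadata.
PODS = [
    {"name": "web-1", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "web-2", "labels": {"app": "web", "tier": "frontend"}},
    {"name": "db-1", "labels": {"app": "db", "tier": "backend"}},
]

if __name__ == "__main__":
    # A Service with selector {"app": "web"} would front both web pods.
    print(select_pods({"app": "web"}, PODS))
```

Because the Service refers to labels rather than to individual pods, pods can be replaced or rescheduled without the Service definition changing.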

What Is Kubernetes Used For: Main Features & Applications

Kubernetes automates most tedious and time-consuming tasks that would otherwise have to be completed manually. Here are the features provided by Kubernetes that make container management easier:

- Automated rollouts/rollbacks: Kubernetes rolls out changes progressively, so that not all instances of an application are taken down at the same time. If something goes wrong, Kubernetes can roll the change back.

- Service discovery and load balancing: Through "service discovery," services can find one another automatically, without IP addresses or endpoints being specified in advance. Kubernetes can expose a container using a DNS name or its own IP address. If traffic to a container is heavy, Kubernetes can load balance and distribute the network traffic to keep the deployment stable.

- Coordinating data storage: Kubernetes can integrate with popular cloud providers and storage systems. It can automatically mount local storage, storage from public cloud providers, and network storage systems such as NFS, iSCSI, Gluster, Ceph, Cinder, and Flocker.

- Configuration and secret management: Using a Secret, you can avoid writing sensitive information into your application code. You can deploy and update secrets and application configuration without rebuilding your container images or exposing secrets in your stack configuration.

- Automatic bin packing: Kubernetes can make the best use of container resources (RAM, CPU, etc.) by automatically placing containers on your nodes based on your requirements. Furthermore, workloads are balanced between urgent and best-effort tasks so that all available resources can be used effectively.

- Batch execution: Kubernetes can manage batch and CI workloads and replace failed containers. It provides two workload resources: a Job object, which creates one or more Pods and retries them until a specified number terminate successfully; and a CronJob object, which lets you set up repeating tasks such as backups and emails, or schedule individual tasks for a specific time.

- IPv4/IPv6 dual-stack: Kubernetes supports Dual-stack Pod networking (a single IPv4 and IPv6 address per Pod), IPv4 and IPv6 enabled Services, and Pod off-cluster egress routing through IPv4 and IPv6 interfaces.

- Self-healing: Kubernetes monitors the health of containers, pods, and nodes. It restarts containers that fail, replaces pods when needed, terminates containers that do not respond to a user-defined health check, and keeps a container hidden from clients until it is ready to serve.

- Horizontal scalability: Kubernetes lets users horizontally scale containers as application needs change. When demand rises, more Pods are added to handle the extra workload. The Horizontal Pod Autoscaler can be used to do this automatically.

- Dynamic Volume Provisioning: Dynamic volume provisioning creates storage on demand. Without it, cluster admins must manually create new storage volumes and then represent them in Kubernetes as PersistentVolume objects. With it, admins no longer need to pre-provision storage; storage is provisioned automatically when it is requested.

- DevSecOps & Security: Kubernetes makes it easier for DevOps teams to move towards DevSecOps, because users can configure and administer Kubernetes’ native security controls directly rather than depending on separate tools run by security operators. These controls include network policies (used to restrict pod-to-pod traffic), role-based access control (used to define the permissions of users and service accounts), and more.
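
To make the horizontal scalability item above more concrete, here is a small sketch of the scaling rule that the Kubernetes documentation gives for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function name, the clamping bounds, and their default values are assumptions for illustration. The real autoscaler also applies a tolerance and stabilization behaviour that this sketch leaves out.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Replica count suggested by the basic HPA scaling rule.

    desired = ceil(current_replicas * current_metric / target_metric),
    clamped to [min_replicas, max_replicas]. The default bounds are
    illustrative assumptions, not Kubernetes defaults.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 2 pods averaging 200m CPU against a 100m target: scale out.
    print(desired_replicas(2, 200, 100))
    # 4 pods averaging 50m CPU against a 100m target: scale in.
    print(desired_replicas(4, 50, 100))
```

With these inputs the rule doubles the replica count in the first case (2 to 4) and halves it in the second (4 to 2), which matches the "more Pods when demand rises" behaviour described in the list.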

Container engines and container runtime

A container engine is the software that oversees the container functions. The engine responds to user input by accessing the container image repository and loading the correct file to run the container. The container image is a file consisting of all the executable code you need to deploy the container again and again. It describes the container environment and contains the software necessary for the application to run.

When you use Kubernetes, kubelets interact with the engine to make sure the containers communicate, load images, and allocate resources correctly. A core component of the engine is the container runtime, which is largely responsible for running the container. While the Docker runtime was originally the standard solution, you can now use any runtime that runs OCI-compliant (Open Container Initiative) containers and implements the Kubernetes Container Runtime Interface (CRI), such as containerd or CRI-O.

What are the benefits of Kubernetes?

Kubernetes is a powerful tool that lets you run software in a cloud environment on a massive scale. If done right, it can boost productivity by making your applications more stable and efficient.

- Improved efficiency

Kubernetes automates self-healing, which saves dev teams time and massively reduces the risk of downtime.

- More stable applications

With Kubernetes, you can have rolling software updates without downtime.

- Future-proofed systems

As your system grows, you can scale both your software and the teams working on it because Kubernetes favors decoupled architectures. It can handle massive growth because it was designed to support large systems. Furthermore, all major cloud vendors support it, offering you more choice.

- Potentially cheaper than the alternatives

It’s not suitable for small applications, but when it comes to large systems, it’s often the most cost-effective solution because it can automatically scale your operations. It also leads to high utilization, so you don’t end up paying for capacity you don’t use. Most of the tools in the K8s ecosystem are open-source and, therefore, free to use.

What are the disadvantages of Kubernetes?

As with all things, Kubernetes isn’t for everyone. It’s famously complex, which can feel daunting to developers who aren’t experts with infrastructure tech.

It may also be overkill for smaller applications. Kubernetes is best for huge-scale operations, rather than small websites.

Who Uses Kubernetes

Kubernetes is a popular choice among container platforms. Its flexible application support, which can lower hardware costs and lead to more efficient architecture, has prompted many businesses to adopt it since its release, and many others are likely to follow. Here’s how some companies have used Kubernetes to solve their problems:

- Babylon: Built a self-service AI training platform on top of Kubernetes for their Medical AI Innovations.

- Adidas: Within a year, Adidas saw a 40% reduction in the time it needed to get a project up and running and integrated into its infrastructure.

- Squarespace: By implementing Kubernetes and updating its networking stack, Squarespace has cut the time it takes to deliver new features by approximately 85%.

- Nokia: Uses Kubernetes as a telecom company to enable 5G and DevOps, moving to cloud-native technologies that make its products infrastructure-agnostic.

- Spotify: An early container user, Spotify is migrating to Kubernetes, which provides greater agility, lower costs, and alignment with the rest of the industry on best practices.

Kubernetes vs. Docker

Docker is a platform for building and running containers, while Kubernetes is a platform for running and managing containers across a cluster, whichever tool built them. It’s not a matter of either/or; the two complement each other.

For example, as an application you containerized with Docker grows and develops a layered architecture, it can become difficult to keep up with each layer’s resource needs. Kubernetes can take care of these containers and automate health monitoring and failure handling.

Docker can run without Kubernetes; however, using it with Kubernetes improves your app’s availability and infrastructure. In addition, it increases app scalability; for example, if your app gets a lot of traffic and you need to scale out to improve user experience, you can add extra containers or nodes to your Kubernetes cluster.

Summary

You can think of Kubernetes as a classic "master-worker" cluster setup. The master node (the control plane) runs the processes needed to run and manage the cluster, and the worker nodes actually run your applications.

So you tell Kubernetes the application's desired state, and it is then the responsibility of Kubernetes to achieve and maintain that state.

You give these instructions in YAML or JSON manifest (config) files. For example, say I want to run 3 different Spring Boot applications, each with 2 replicas, on some specified ports. I would prepare the manifest files and hand them to Kubernetes, and the rest would be taken care of.
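
As a rough sketch of that example, the following Python script builds one Deployment manifest per application and prints each as JSON. The application names, image references, and ports are hypothetical placeholders, and the `deployment_manifest` helper is made up for this post. Only the manifest field names (`apiVersion`, `kind`, `spec.replicas`, and so on) follow the standard Deployment format.

```python
import json


def deployment_manifest(name, image, port, replicas=2):
    """Build an apps/v1 Deployment manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,
            # The selector must match the labels on the pod template.
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


# Hypothetical application names, image references, and ports.
APPS = [
    ("orders", "example.com/orders:1.0", 8080),
    ("payments", "example.com/payments:1.0", 8081),
    ("catalog", "example.com/catalog:1.0", 8082),
]

if __name__ == "__main__":
    for name, image, port in APPS:
        print(json.dumps(deployment_manifest(name, image, port), indent=2))
```

Each printed document could be saved to its own file and handed to the cluster with `kubectl apply -f <file>`. Kubernetes would then create and maintain 2 replicas of each application, for 6 pods in total.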
